What is DStream in Spark

In this tutorial, we will learn what Spark Streaming is and what a discretized stream, or DStream, is in Spark.

What are DStreams in Spark?

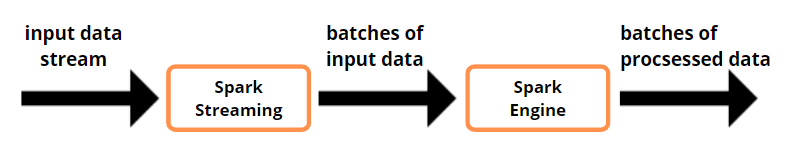

Spark Streaming is an extension of the core Spark API that enables scalable, high-throughput, fault-tolerant processing of live data streams. Data can be ingested from a variety of sources, including Kafka, Kinesis, and TCP sockets, and processed with complex algorithms expressed through high-level functions like map, reduce, join, and window. Finally, the processed data can be written to filesystems, databases, and live dashboards. Spark's machine learning and graph processing algorithms can even be applied to data streams.

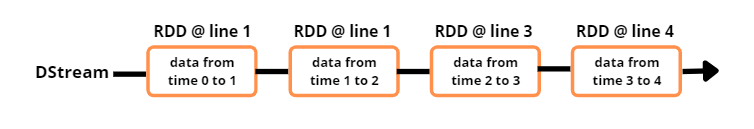

A discretized stream, or DStream, is the high-level abstraction provided by Spark Streaming to represent a continuous stream of data. DStreams can be created either from input data streams from sources like Kafka and Kinesis, or by applying high-level operations to existing DStreams. Internally, a DStream is represented as a continuous series of RDDs (Resilient Distributed Datasets). Each RDD in a DStream holds the data from a specific time interval.
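To make the "one RDD per interval" idea concrete, here is a minimal plain-Python sketch. It does not use Spark: each batch is modeled as an ordinary list standing in for an RDD, and the `discretize` function, the `batch_interval` parameter, and the sample timestamps are all hypothetical names and values chosen for illustration. Timestamps are assumed to be non-negative seconds.

```python
from collections import defaultdict


def discretize(events, batch_interval):
    """Group (timestamp, record) pairs into consecutive batches.

    Batch k holds the records whose timestamp falls in
    [k * batch_interval, (k + 1) * batch_interval).
    Each returned list stands in for one RDD of a DStream.
    """
    buckets = defaultdict(list)
    for timestamp, record in events:
        buckets[int(timestamp // batch_interval)].append(record)
    if not buckets:
        return []
    last = max(buckets)
    # Intervals with no data still produce an (empty) batch in this model.
    return [buckets.get(k, []) for k in range(last + 1)]


# Hypothetical input: (arrival time in seconds, line of text)
events = [(0.2, "a b"), (0.9, "c"), (1.5, "d e"), (3.1, "f")]
batches = discretize(events, batch_interval=1.0)
print(batches)  # [['a b', 'c'], ['d e'], [], ['f']]
```

The third batch is empty because no record arrived between seconds 2 and 3. In this sketch, the stream is simply the ordered list of batches.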

Any operation applied to a DStream translates to operations on the underlying RDDs. For example, when converting a stream of lines to words, the flatMap operation is applied to each RDD in the lines DStream to generate the RDDs of the words DStream.
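The lines-to-words conversion can be modeled in plain Python as well. This is only a sketch of the idea, not Spark's implementation: the `flat_map_batches` helper and the sample data are hypothetical, and each inner list stands in for one RDD of the stream.

```python
def flat_map_batches(batches, fn):
    """Apply fn to every record of every batch, flattening within each batch.

    Batch boundaries are preserved: one input batch yields one output batch,
    mirroring how a DStream operation maps each source RDD to a result RDD.
    """
    return [[out for record in batch for out in fn(record)] for batch in batches]


# Hypothetical "lines" stream: three batches, the second one empty.
lines = [["hello world", "spark streaming"], [], ["dstream"]]

# The "words" stream is produced by splitting every line in every batch.
words = flat_map_batches(lines, lambda line: line.split(" "))
print(words)  # [['hello', 'world', 'spark', 'streaming'], [], ['dstream']]
```

Note that the transformation never mixes records across batches. That per-batch behavior is what the paragraph above describes.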

These underlying RDD transformations are computed by the Spark engine. The DStream operations hide most of these details and give the developer a higher-level API for convenience.